Harness Vibe Research 📟

TL;DR — Full research autonomy will happen — but it won’t come from a pre-built product. It will emerge from your own harness: a system that absorbs your judgment, encodes your taste, and compounds with every run. The question is not whether this shift is coming. The question is whether you are building the harness to grow with it.

More autonomy alone often increases entropy. Too little AI integration creates almost no leverage. The real multiplier is harness design: how humans and agents share context, verification, and control.

The signal is already here

This shift is not theoretical. Multiple teams in different settings are reporting the same pattern:

- Sakana AI reported on AI Scientist v2, discussing both the progress and the quality limits of autonomous paper-generation workflows.

- Google shared Gemini Deep Think results on open math problems and shipped AI co-scientist — a multi-agent system on Gemini 2.0 with self-critique, Elo-based ranking, and human-in-the-loop design. Its drug repurposing and target discovery hypotheses were experimentally validated in the lab.

- Orchestra demonstrated an ACL submission timeline built with an AI co-scientist workflow.

- Open ecosystems such as K-Dense and Biomni are treating reusable “skills” and know-how libraries as core research infrastructure, not optional add-ons.

- Even at the execution layer, Anthropic’s Programmatic Tool Calling is rethinking how agents compose actions — optimizing for token efficiency and multi-step orchestration.

Different institutions, different domains, same direction: people are shipping systems, not just demos.

What coding agents already taught us

Before research agents, coding agents already exposed the key lesson.

OpenAI publicly described building large production systems with AI-generated code and emphasized environment and harness design as the engineering bottleneck.

LangChain reported in a blog post that major benchmark gains can come purely from harness upgrades: self-verification loops, better context injection, loop guards, and reasoning budget control. The base model class stayed the same; only the orchestration improved.

The capability jump came from system design, not from a larger model release.
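To make the harness upgrades named above concrete, here is a minimal sketch of a retry loop that combines self-verification, a loop guard, an attempt budget, and context injection. It is an illustration under stated assumptions, not LangChain's or OpenAI's implementation: `generate` and `verify` are hypothetical callables standing in for a model call and a checker, and the budget is counted in attempts rather than tokens.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class HarnessResult:
    answer: str
    attempts: int
    verified: bool
    stop_reason: str


def run_with_harness(
    task: str,
    generate: Callable[[str], str],      # hypothetical: prompt -> candidate answer
    verify: Callable[[str, str], bool],  # hypothetical: (task, answer) -> passes checks?
    max_attempts: int = 3,               # budget, counted in attempts for simplicity
) -> HarnessResult:
    seen: List[str] = []
    prompt = task
    answer = ""
    for attempt in range(1, max_attempts + 1):
        answer = generate(prompt)
        # Loop guard: stop if the model repeats an earlier answer verbatim.
        if answer in seen:
            return HarnessResult(answer, attempt, False, "loop_detected")
        seen.append(answer)
        # Self-verification: accept only answers that pass the checker.
        if verify(task, answer):
            return HarnessResult(answer, attempt, True, "verified")
        # Context injection: tell the next attempt what already failed.
        prompt = (
            f"{task}\n"
            f"Previous attempt failed verification: {answer}\n"
            "Try a different approach."
        )
    return HarnessResult(answer, max_attempts, False, "budget_exhausted")
```

Nothing in this loop changes the model. Every gain it can produce comes from what the harness does around each call.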

The autonomy paradox

There is a natural instinct to push to full automation: remove humans and let agents run end to end.

But in research, higher autonomy frequently increases drift risk:

- agents can optimize for easy novelty instead of meaningful questions,

- outputs can look polished while missing key checks,

- small errors compound across long execution chains.

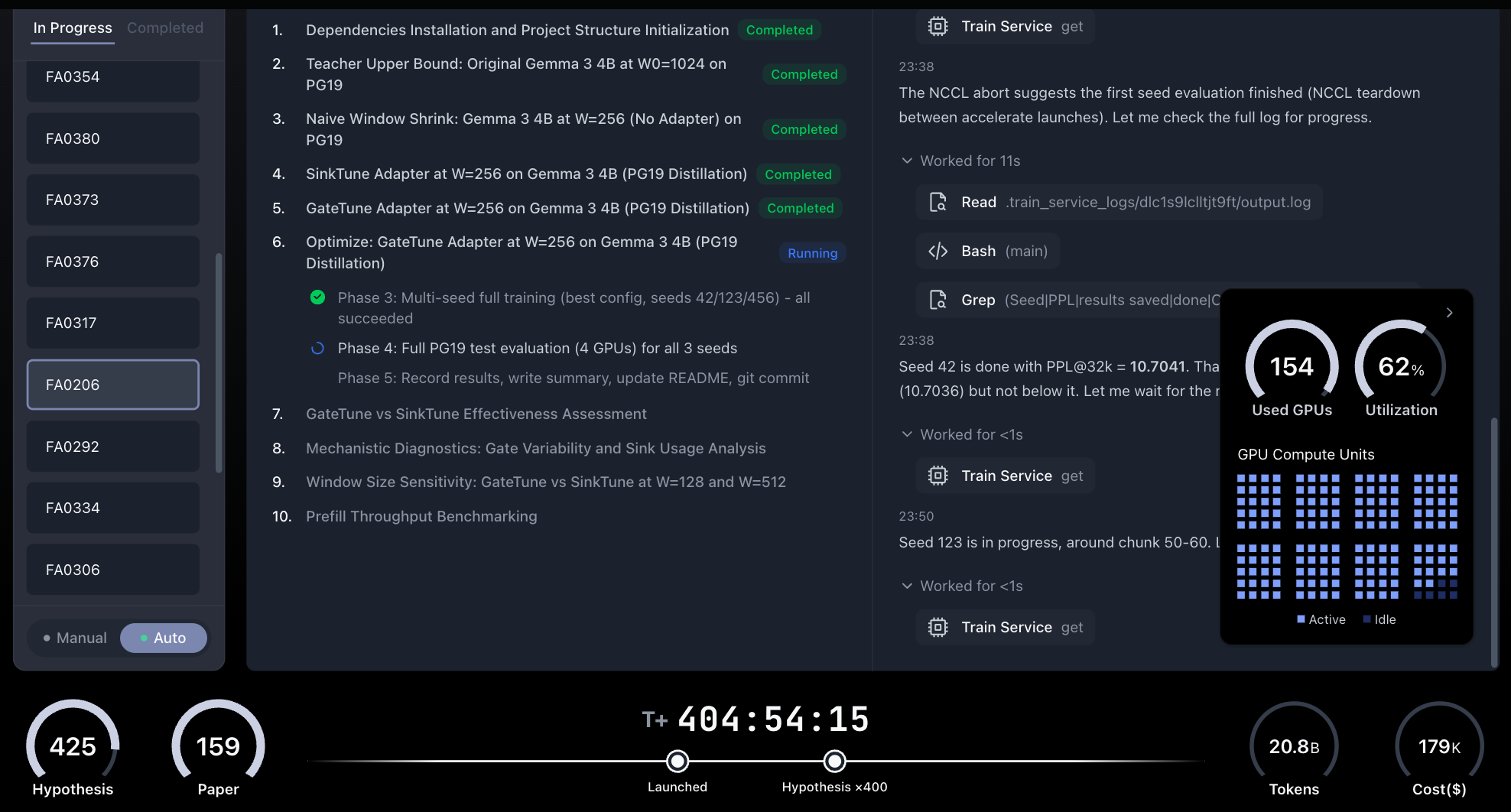

Several autonomous research systems have openly acknowledged this. Analemma AI’s FARS has now run continuously for 400+ hours, consumed 20.8 billion tokens (~$179K), generated 425 hypotheses, and produced 150+ papers. Earlier in the run, the first 100 papers scored 5.05 on ICLR review standards: above the average human submission (4.21) but below the acceptance line (5.39). Quantity is consistent and quality is stable, but there has been no breakthrough. Human review remains mandatory before publication.

FARS livestream dashboard — 404 hours, 159 papers, 425 hypotheses, 20.8B tokens, $179K cost, 154 GPUs at 62% utilization
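As a rough back-of-envelope reading of the dashboard figures above, the unit economics can be computed directly. The sketch below uses only the quoted numbers. The dollar cost is approximate in the source, so the derived values are approximate too.

```python
# Figures as quoted from the FARS dashboard caption; cost_usd is approximate.
tokens = 20.8e9
cost_usd = 179_000
papers = 159
hypotheses = 425
hours = 404

cost_per_paper = cost_usd / papers
cost_per_million_tokens = cost_usd / tokens * 1e6
tokens_per_paper = tokens / papers
papers_per_hypothesis = papers / hypotheses
papers_per_hour = papers / hours

print(f"cost per paper:           ${cost_per_paper:,.0f}")
print(f"cost per million tokens:  ${cost_per_million_tokens:.2f}")
print(f"tokens per paper:         {tokens_per_paper / 1e6:.1f}M")
print(f"papers per hypothesis:    {papers_per_hypothesis:.2f}")
print(f"papers per hour:          {papers_per_hour:.2f}")
```

On these figures the run works out to roughly $1,100 and about 130 million tokens per paper. That shows how much execution sits behind each paper before any human review begins.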

The opposite extreme also fails. If AI is only autocomplete attached to an unchanged workflow, it speeds typing but does not change research throughput or insight quality.

The Autonomy Paradox — Full Automation creates chaos, No AI creates exhaustion, Harness is the sweet spot

So the design problem is not autonomy maximization. The design problem is controllable compounding.

Harness, not workflow

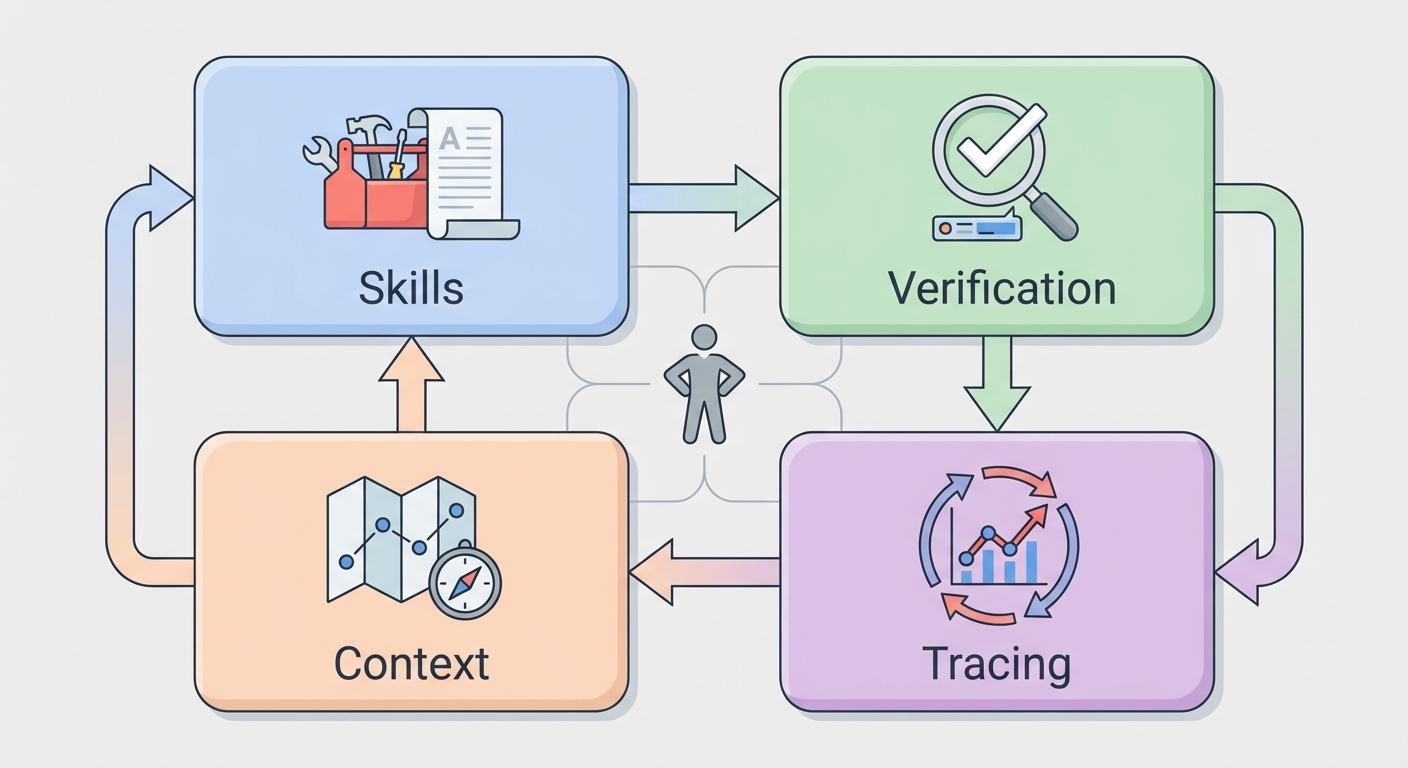

A workflow is a sequence of steps. A harness is a coupling layer between human intent and AI execution.

A good research harness is not rigid. It is adaptive and inspectable, with clear handoff points between person and agent.

Operationally, this means four concrete components:

- Skills as reusable domain procedures. Encode expert know-how in structured artifacts: what to test, which tools to call, what evidence thresholds to use, and how to interpret failure.

- Verification loops tied to hypotheses. Require each run to check claims against the original question, edge cases, and relevant prior literature before outputs are accepted.

- Context engineering as an environment checklist. Define available data, tools, constraints, and success criteria up front so the agent reasons inside the real research boundary.

- Tracing plus iteration. Capture run traces, inspect failures, and update the harness. Treat every run as training data for the system design itself.

Four components of a research harness — Skills, Verification, Context, Tracing — with the researcher at the center

When these four pieces are in place, quality improves because the system becomes easier to debug, not because the model “became smarter” overnight.
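One way to picture the Skills and Context components is as plain data structures with a preflight check run before any agent starts. This is a minimal sketch, not a standard schema: the class names, field names, and the `preflight` function are all illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Skill:
    """A reusable domain procedure (field names are illustrative)."""
    name: str
    what_to_test: List[str]
    tools: List[str]
    evidence_threshold: str
    on_failure: str


@dataclass
class RunContext:
    """Environment checklist handed to the agent before a run starts."""
    data: List[str] = field(default_factory=list)
    tools: List[str] = field(default_factory=list)
    constraints: List[str] = field(default_factory=list)
    success_criteria: List[str] = field(default_factory=list)

    def missing(self) -> List[str]:
        # Any empty checklist field means the research boundary is underspecified.
        return [name for name, value in vars(self).items() if not value]


def preflight(skill: Skill, ctx: RunContext) -> List[str]:
    """Return a list of problems; an empty list means the run may start."""
    problems = [f"context field '{m}' is empty" for m in ctx.missing()]
    problems += [
        f"skill needs tool '{t}' not in context"
        for t in skill.tools
        if t not in ctx.tools
    ]
    return problems


skill = Skill(
    name="baseline-replication",
    what_to_test=["reported metric within tolerance"],
    tools=["python", "gpu"],
    evidence_threshold="3 seeds, mean within 1 std of the reported value",
    on_failure="report the gap; do not tune hyperparameters silently",
)
ctx = RunContext(
    data=["train.csv"],
    tools=["python"],
    constraints=["8 GPU-hours"],
    success_criteria=[],
)
problems = preflight(skill, ctx)
print(problems)
```

The check surfaces two harness bugs before the model is invoked: success criteria were never defined, and the skill expects a tool the environment does not provide. Failures like these are cheap to debug because they are visible as data.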

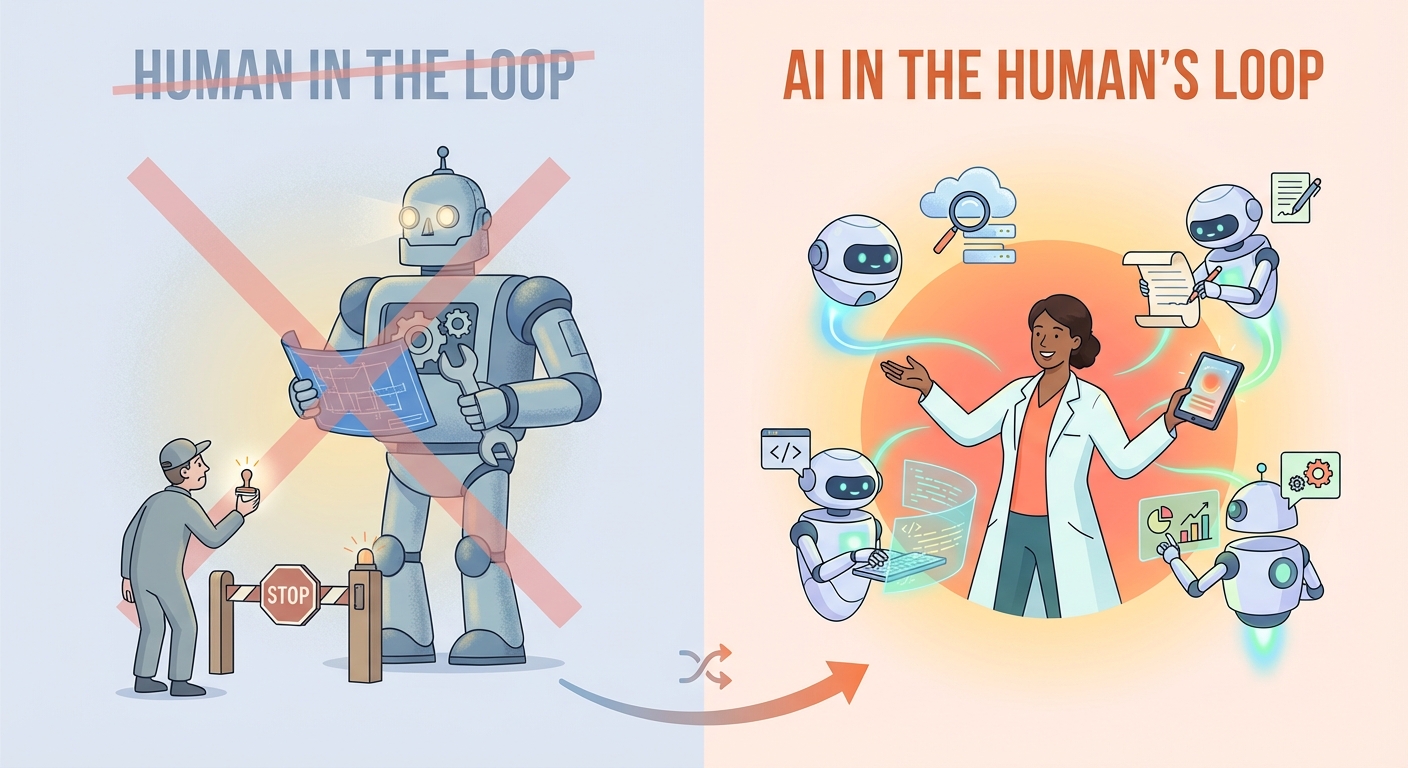

AI in the human’s loop

“Human in the loop” often implies AI is the main actor and people are safety checkpoints.

For research, a better framing is AI in the human’s loop.

The human owns direction, taste, and significance judgments. The AI expands search, drafts alternatives, and accelerates execution.

A practical division of labor:

- Human chooses the question; AI maps the possibility space.

- Human decides what is meaningful; AI runs and refines candidate paths.

- Human owns the published claim; AI supports drafting, formatting, and reproducibility packaging.

Paradigm shift — from “Human in the Loop” to “AI in the Human’s Loop”

These decisions are not arbitrary. A researcher operates under real constraints — limited funding, finite compute, a specific disciplinary background, access to particular platforms and collaborators. What looks like “taste” is actually constrained optimization: choosing the best move given the resources at hand. That is precisely why the human must stay in the driver’s seat — no model has access to these constraints.

Human oversight in this model is O(1) — a constant-cost operation that grounds the entire system, regardless of task complexity.

This is not semantics. It changes architecture, evaluation, and team roles.

Completeness over perfection

Many teams perfect one stage — usually generation or writing — while leaving the rest manual and fragile. But research is a cycle: ideation → experiment design → execution → analysis → writing → revision. A break anywhere stalls everything.

An imperfect but complete harness usually outperforms a perfect partial tool, because researchers can intervene at any node and keep the full cycle moving. Phylo’s Biomni and its Know-How Library already illustrate this: lifecycle coverage beats single-stage depth.
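The "complete but imperfect" idea can be sketched as a cycle runner in which every stage has some handler, even a crude placeholder, and a human can override the artifact at any node. The stage names come from the cycle above. `run_cycle`, the handler signature, and the `review` callback are hypothetical names chosen for illustration.

```python
from typing import Callable, Dict, List, Optional

STAGES = ["ideation", "experiment_design", "execution", "analysis", "writing", "revision"]

Stage = Callable[[str], str]                  # previous artifact -> new artifact
Review = Callable[[str, str], Optional[str]]  # (stage, artifact) -> replacement or None


def run_cycle(seed: str, handlers: Dict[str, Stage], review: Review) -> List[str]:
    """Run every stage in order, letting a human override the artifact at any node."""
    artifact = seed
    log: List[str] = []
    for stage in STAGES:
        # A crude default keeps the cycle moving when a stage has no dedicated tool yet.
        handler = handlers.get(
            stage, lambda a, s=stage: f"{a} -> [{s}: manual placeholder]"
        )
        artifact = handler(artifact)
        # Human intervention point: returning None accepts the artifact as-is.
        override = review(stage, artifact)
        if override is not None:
            artifact = override
        log.append(f"{stage}: {artifact}")
    return log
```

A team that has automated only ideation can still run all six stages this way, and each placeholder marks where the next harness improvement should go.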

Three steps you can start today

The core idea: encode your research taste into the harness. Let the harness drive the model. You drive the vibe research.

Talk to your system. Encode your taste as a Skill. Pick one workflow you repeat every week — baseline replication, literature comparison, whatever it is. Instead of running it yourself, sit down and tell your agent: here is how I judge quality, here are the signals I watch, here is where I pause to think. Write that into a Skill file. You are turning your research intuition into an executable asset.

Give the system authority, but bind it to verification. Let the agent run autonomously — but require it to answer two questions before delivering results: does this address the original hypothesis? Is there an edge case being ignored? You do not need to watch every step. You just need a gate at the exit. Trust the system, but verify the output.
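A gate at the exit can be as small as a structured report the agent must fill in, plus a function that refuses delivery when either question is unanswered. The following is a sketch under assumptions: `GateReport` and `exit_gate` are hypothetical names, and a real gate would likely check the cited evidence rather than trust the agent's self-report.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GateReport:
    addresses_hypothesis: bool
    hypothesis_evidence: str          # where in the results the hypothesis is addressed
    edge_cases_considered: List[str]  # edge cases the agent explicitly examined


def exit_gate(report: GateReport) -> List[str]:
    """Return reasons to reject the run; an empty list means results may be delivered."""
    reasons: List[str] = []
    if not report.addresses_hypothesis:
        reasons.append("results do not address the original hypothesis")
    elif not report.hypothesis_evidence.strip():
        reasons.append("claimed to address the hypothesis but cited no evidence")
    if not report.edge_cases_considered:
        reasons.append("no edge cases were considered")
    return reasons
```

The agent runs unobserved, but nothing leaves the system until `exit_gate` returns an empty list.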

Review traces. Evolve your harness. Keep run logs. After every run, ask: where did the agent get stuck? Which Skill was underspecified? Update one rule, one context block, or one verification step. Each iteration compounds — your harness absorbs more of your judgment every cycle.
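Trace review does not need special tooling to start. A minimal sketch, assuming each run appends one JSON line with hypothetical fields `skill`, `status`, and `stuck_at`, is enough to answer "where did the agent get stuck?" across many runs.

```python
import json
import tempfile
from collections import Counter
from pathlib import Path
from typing import Dict, List, Tuple


def log_run(path: Path, record: Dict) -> None:
    """Append one run record as a JSON line."""
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


def load_runs(path: Path) -> List[Dict]:
    """Read all run records back, skipping blank lines."""
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


def stuck_points(runs: List[Dict]) -> List[Tuple[Tuple[str, str], int]]:
    """Count failed runs by (skill, step), most frequent first."""
    counts = Counter((r["skill"], r["stuck_at"]) for r in runs if r["status"] != "ok")
    return counts.most_common()


# Demo with made-up records written to a temporary file.
with tempfile.TemporaryDirectory() as tmp:
    trace_file = Path(tmp) / "runs.jsonl"
    log_run(trace_file, {"skill": "lit-comparison", "status": "failed", "stuck_at": "citation check"})
    log_run(trace_file, {"skill": "baseline-replication", "status": "ok", "stuck_at": ""})
    log_run(trace_file, {"skill": "lit-comparison", "status": "failed", "stuck_at": "citation check"})
    log_run(trace_file, {"skill": "baseline-replication", "status": "failed", "stuck_at": "data loading"})
    summary = stuck_points(load_runs(trace_file))

print(summary)
```

The top entry of the summary points at the Skill and step to fix first, which matches the "update one rule per run" habit described above.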

Through this loop of encoding, delegating, and refining, you will notice something shift: it is not that the model got smarter — it is that your system became more like you.

Build the soil now

This is not just about us. In K-12 education, the harness revolution is already here. Alpha School replaced classroom lectures with AI tutors and turned teachers into motivational guides; its students spend only two to three hours a day on academics yet consistently score in the 99th percentile. A Harvard study found that students learning physics with an AI tutor outperformed those taught by a professor. The coupling of human guidance and AI execution is already surpassing traditional human-only performance in education. Research is next.

These students have not grown up yet. But when they do, they will arrive in labs and research groups expecting human-AI coupling as the default — because it is all they have ever known. If the research world has not built its own harness infrastructure by then, it will be the system that is behind, not the people. That is why we need to start now: not just to accelerate our own work, but to cultivate the soil so the next generation of researchers inherits compounding, not a cold start.

The tools are ready — the harness gap remains

The ecosystem already has most building blocks: reusable skills, harness engineering practices, multi-agent orchestration frameworks, and programmatic tool calling. Tools like Deep Agents already provide the agent harness infrastructure (planning, context management, sub-agent delegation, persistent memory), built on LangChain.

What is still missing in many teams is the integration layer that keeps humans and agents in tight, high-frequency collaboration.

We are excited to see — and contribute to — an open-source research harness built on these principles. More soon.

Citation

If you found this useful, please cite it as:

Zhang, X. "Harness Vibe Research" (March 2026). https://x-izhang.github.io/blog/vibe-research/

Or use the BibTeX citation:

@article{zhang2026harnessviberesearch,
  title = {Harness Vibe Research},
  author = {Xi Zhang},
  year = {2026},
  month = {March},
  journal = {x-izhang.github.io},
  url = {https://x-izhang.github.io/blog/vibe-research/}
}